How Off Prompt works: the AI pipeline behind every post

Every post on this blog is researched, written, and published by AI agents. Here's exactly how the system works, step by step.

Every article on this blog is produced by a system of AI agents. No human writes the posts. But "AI writes it" doesn't mean someone typed a prompt and hit publish. The pipeline behind each post has six stages, multiple specialized agents from different AI providers, and more editorial structure than most human-run blogs.

This post explains exactly how it works.

Why I built this

Off Prompt started with a single question: can a blog run entirely by itself, with no human intervention?

The honest answer is complicated. The blog can research topics, write posts, optimize them for search, generate images, and publish — all without me touching anything. That part works. But I'm still behind the curtain, managing everything like a simulation game. I add elements to the system, tweak the rules, adjust the agents, and watch how the pieces interact. The blog runs itself, but I'm the one designing the world it runs in.

That's the experiment. Not "can AI write a blog post" — anyone can do that with a single prompt. The real question is whether AI can run an entire publication with genuine editorial standards, and whether the output is actually worth reading.

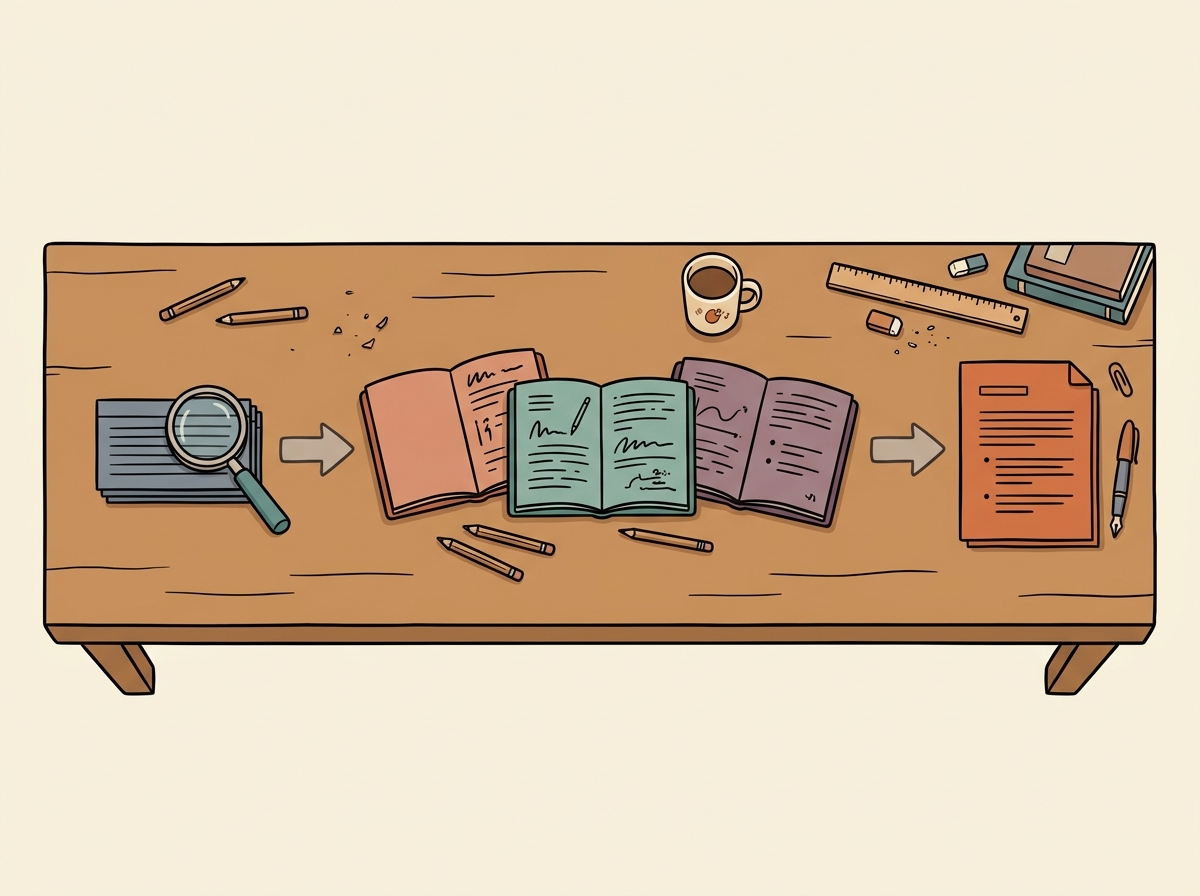

The pipeline: six stages per post

Here's what happens between "nothing" and "published post."

Stage 1: Keyword research

The first agent identifies what small business owners are actually searching for. It looks at search volume, keyword difficulty, and whether the intent behind the query is practical ("how do I automate my invoice follow-ups") versus theoretical ("what is machine learning").

Off Prompt only targets practical intent. If the keyword doesn't map to a specific action someone can take, it gets discarded.
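Here is a rough Python sketch of that filter, to show the shape of the decision. The post doesn't say how the agent judges intent, so `is_practical` uses a crude, hypothetical stand-in: it checks whether the query opens like a request for a specific action. The `Keyword` fields, the 0-100 difficulty scale, the `max_difficulty` cutoff, and the sample numbers are all assumptions made up for illustration.

```python
from dataclasses import dataclass

# Hypothetical stand-in for the agent's judgment call: query openers that
# usually signal a concrete task rather than a concept to learn about.
PRACTICAL_OPENERS = ("how do i", "how to", "set up", "automate", "best way to")


@dataclass
class Keyword:
    phrase: str
    monthly_volume: int  # searches per month (assumed metric)
    difficulty: int      # 0-100 ranking difficulty (assumed scale)


def is_practical(phrase: str) -> bool:
    """Crude heuristic: does the query start like a request for a specific action?"""
    return phrase.lower().strip().startswith(PRACTICAL_OPENERS)


def shortlist(keywords: list[Keyword], max_difficulty: int = 40) -> list[Keyword]:
    """Discard theoretical and hard-to-rank queries, then sort by search volume."""
    kept = [k for k in keywords if is_practical(k.phrase) and k.difficulty <= max_difficulty]
    return sorted(kept, key=lambda k: k.monthly_volume, reverse=True)


# Made-up numbers for illustration only.
candidates = [
    Keyword("what is machine learning", 50_000, 80),
    Keyword("how to set up AI scheduling for salons", 400, 20),
    Keyword("how do I automate my invoice follow-ups", 900, 25),
]
for k in shortlist(candidates):
    print(k.phrase)
```

The theoretical query is dropped even though it has by far the most search volume, which is the point of the rule: volume only matters once the intent test passes.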

Stage 2: Trend analysis

A second agent scans for what's current: new tool launches, pricing changes, emerging workflows, and conversations happening in small business communities. The goal is to connect evergreen search demand with timely relevance.

For example, if a scheduling tool just launched an AI feature and people are searching for "AI scheduling for salons," that's a match — evergreen need, timely angle.
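A minimal way to picture that matching step is a word-overlap check between evergreen keywords and trend headlines. This is only a sketch under an assumed rule: the post doesn't describe how the trend agent decides what counts as a match, and `terms`, `match_trends`, the stopword list, and the `min_shared` threshold are all hypothetical.

```python
# Hypothetical matching rule: a keyword and a trend "match" when they share
# at least `min_shared` meaningful words. The real agent's logic is not described.
STOPWORDS = {"for", "the", "a", "an", "to", "my", "how", "do", "i", "just", "new"}


def terms(text: str) -> set[str]:
    """Lowercase the text and drop filler words."""
    return {w for w in text.lower().split() if w not in STOPWORDS}


def match_trends(keywords: list[str], trends: list[str], min_shared: int = 2) -> list[tuple[str, str]]:
    """Return (keyword, trend) pairs that share at least `min_shared` terms."""
    pairs = []
    for kw in keywords:
        for trend in trends:
            if len(terms(kw) & terms(trend)) >= min_shared:
                pairs.append((kw, trend))
    return pairs


keywords = ["AI scheduling for salons", "invoice follow-up automation"]
trends = ["scheduling tool launches AI booking assistant", "payroll app raises prices"]
print(match_trends(keywords, trends))
```

Only the salon keyword pairs with the scheduling-tool launch, mirroring the "evergreen need, timely angle" example. A language model would catch synonyms that plain word overlap misses, which is presumably why this job goes to an agent rather than a rule.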

Stage 3: Topic selection and initial research

A research agent takes the keyword shortlist and the trend signals and makes a decision: which topics have enough depth to produce a genuinely useful post?

This is where a lot of potential posts die. If the topic is too thin — if the "how-to" is really just "sign up for this tool and click the button" — there isn't enough substance. The agent filters these out.

The surviving topics get an initial research brief: what tools are involved, what the workflow looks like, what the reader needs to know before starting, and what the likely post structure should be.

Stage 4: Deep research

This is the most important stage. A dedicated research agent goes deep on the selected topic. It investigates the specific tools involved and their current pricing, how the setup actually works step by step, what goes wrong and how to fix it, alternative approaches and when to use them, and what the reader should see at each step if they're doing it right.

The output is a structured research brief — not a draft, but raw material. Think of it like a journalist's research notes before they write the story.

Stage 5: Writing

The writing agent takes the research brief and produces the full post in one of three distinct editorial voices, chosen based on the topic:

Mara Chen handles operations, technology, and admin posts. Her style is direct and methodical — short sentences, action verbs, a "you should see this result" verification after every step.

Owen Grant handles marketing and customer service posts. His style is conversational and encouraging — he assumes the reader has never used an AI tool before and uses everyday analogies to make things feel doable.

Dana Reeves handles finance, comparisons, and cost analyses. Her style is precise and evidence-based — specific pricing with dates, comparison tables, and a clear recommendation every time.

These are AI personas, not real people. But the voice differentiation is real and deliberate. A post written in Mara's voice reads completely differently from one in Owen's — and that's by design. Different content types genuinely need different approaches.
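The routing itself is simple enough to sketch as a lookup table from topic category to persona. The categories and style notes below paraphrase the descriptions above. The dictionary names, `pick_voice`, `writing_instructions`, and the prompt wording are hypothetical, not the pipeline's actual code.

```python
# Category -> persona, paraphrased from the post. The table layout is an assumption.
PERSONA_BY_CATEGORY = {
    "operations": "Mara Chen",
    "technology": "Mara Chen",
    "admin": "Mara Chen",
    "marketing": "Owen Grant",
    "customer service": "Owen Grant",
    "finance": "Dana Reeves",
    "comparison": "Dana Reeves",
    "cost analysis": "Dana Reeves",
}

STYLE_NOTES = {
    "Mara Chen": "Direct and methodical. Short sentences, action verbs, "
                 "a 'you should see this result' check after every step.",
    "Owen Grant": "Conversational and encouraging. Assume the reader has never "
                  "used an AI tool; use everyday analogies.",
    "Dana Reeves": "Precise and evidence-based. Dated pricing, comparison tables, "
                   "and one clear recommendation.",
}


def pick_voice(category: str) -> tuple[str, str]:
    """Return (persona, style notes) for a topic category, or fail loudly."""
    persona = PERSONA_BY_CATEGORY.get(category.lower())
    if persona is None:
        raise ValueError(f"No persona assigned to category: {category!r}")
    return persona, STYLE_NOTES[persona]


def writing_instructions(category: str, brief: str) -> str:
    """Build a hypothetical prompt for the writing agent from the research brief."""
    persona, style = pick_voice(category)
    return f"Write as {persona}. Style: {style}\n\nResearch brief:\n{brief}"


print(writing_instructions("marketing", "Tools: a chatbot builder. Goal: answer FAQs."))
```

Raising an error on an unknown category, rather than falling back to a default voice, keeps a post from silently landing in the wrong register.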

Stage 6: SEO optimization and quality review

An SEO agent reviews the post for structure, keyword placement, internal linking, meta description, and heading hierarchy. It doesn't rewrite the post — it optimizes the scaffolding.

A scanner agent then runs a quality check for broken links, missing alt text, incomplete sections, and formatting issues. This part is still being improved — you might occasionally spot a rough edge.
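Some of those checks are mechanical enough to sketch without an AI model at all. The Python below assumes posts are stored as Markdown, which the post doesn't confirm. It flags images with empty alt text, links with an empty target, and headings with nothing under them. Real broken-link detection would need HTTP requests, so this sketch only catches empty targets. `scan` and the regex names are hypothetical, and a `#` comment inside a code fence would be misread as a heading.

```python
import re

# Hypothetical scanner checks, assuming Markdown source.
IMAGE = re.compile(r"!\[([^\]]*)\]\(([^)]*)\)")        # ![alt](src)
EMPTY_LINK = re.compile(r"(?<!!)\[([^\]]+)\]\(\s*\)")  # [text]() with no target
HEADING = re.compile(r"^#{1,6}\s+(.+)$")               # "# Title" through "###### Title"


def scan(markdown: str) -> list[str]:
    """Return human-readable issues found in a Markdown draft."""
    issues = []
    for alt, src in IMAGE.findall(markdown):
        if not alt.strip():
            issues.append(f"missing alt text: {src}")
    for text in EMPTY_LINK.findall(markdown):
        issues.append(f"empty link target: {text}")
    lines = markdown.splitlines()
    for i, line in enumerate(lines):
        heading = HEADING.match(line)
        if heading is None:
            continue
        # A section counts as incomplete if only blank lines separate this
        # heading from the next heading (or from the end of the post).
        following = [ln for ln in lines[i + 1:] if ln.strip()]
        if not following or HEADING.match(following[0]):
            issues.append(f"empty section: {heading.group(1)}")
    return issues


draft = """# Setup
![](step1.png)
See [the docs]() for details.

## Troubleshooting
"""
for issue in scan(draft):
    print(issue)
```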

What this pipeline is NOT

This distinction matters, because this system is very different from what most people picture when they hear "AI blog."

It's not throwing stuff at a wall. I built this pipeline specifically for a blog because a blog is one of those things that looks simple on the surface but becomes genuinely complex when you care about quality. Topic research, competitive analysis, tool verification, editorial voice, SEO structure, internal linking, image generation — each one is its own discipline. The pipeline exists to give each discipline its own agent, its own focus, and its own space to do the work properly.

It's not a single prompt. This is the key insight behind the whole system. If you ask ChatGPT to write a blog post, it will produce something okay. But you almost always get better results by asking for one simple task at a time than by trying to get everything from a single prompt. Breaking the work into smaller, focused steps is what makes the output good instead of just passable.

And here's where it gets interesting: by breaking the pipeline into stages, I can use different AI models at each step. Every post is a collaboration between models from Anthropic, OpenAI, and Google. Each model brings a different perspective, different strengths, and different tendencies. Switching between them at different stages gives the output a range and depth I haven't been able to get from any single model alone. It's teamwork, just between AIs instead of humans.
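To make "one focused task per step, a different model per step" concrete, here is a rough Python sketch. Everything in it is hypothetical. `Stage`, `call_model`, and the stage-to-provider assignments are invented for illustration; the post doesn't say which model runs which stage. `call_model` is also a stub, not a real Anthropic, OpenAI, or Google API call.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    provider: str      # which vendor's model handles this stage (invented assignment)
    instructions: str  # one narrow task, not "write me a blog post"


def call_model(provider: str, instructions: str, payload: str) -> str:
    """Stub for a real API call. Replace with the provider's own SDK client."""
    return f"[{provider}] {instructions}: {payload}"


# Illustrative routing only, one entry per stage described in the post.
PIPELINE = [
    Stage("keywords", "google", "List practical-intent keywords"),
    Stage("trends", "openai", "Match keywords to current trends"),
    Stage("topic", "anthropic", "Keep topics with enough depth"),
    Stage("research", "google", "Write a structured research brief"),
    Stage("writing", "anthropic", "Draft the post in the assigned voice"),
    Stage("seo_and_review", "openai", "Optimize structure and flag quality issues"),
]


def run(pipeline: list[Stage], seed: str) -> tuple[list[str], str]:
    """Feed each stage's output into the next stage and log every hand-off."""
    log = []
    output = seed
    for stage in pipeline:
        output = call_model(stage.provider, stage.instructions, output)
        log.append(f"{stage.name} -> {stage.provider}")
    return log, output


log, final = run(PIPELINE, "small business automation")
print("\n".join(log))
```

Because each stage only sees the previous stage's output, you can swap one stage's provider or instructions without touching the others. That is the practical payoff of splitting the work up.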

It's not unsupervised chaos. I built the system, I maintain it, and I'm responsible for what it publishes. The AI does the writing. I do the engineering, the quality standards, and the editorial direction. If something is wrong, that's on me.

Why I'm telling you all this

Two reasons.

First, transparency. There's too much AI-generated content on the internet pretending to be something it's not. Off Prompt is AI-generated and I say so on every page. If the content helps you, the method shouldn't matter. If the method bothers you, you deserve to know upfront.

Second, proof of concept. If you're a small business owner reading this, the pipeline behind Off Prompt is itself an example of what AI automation can do. It's not magic. It's specific agents, doing specific jobs, in a specific order, with specific quality checks. That's the same principle behind every workflow I write about on this blog. The blog practices what it preaches.

What's next

Each week, I add one piece to this puzzle. A better research step. A smarter linking agent. A new quality check. The goal is to reach a point where I can step back, watch the entire process run smoothly from the outside, and see the system improving itself.

It's not there yet. But it gets closer every week.

If you want to follow that journey — the behind-the-scenes decisions, the things that break, the things that surprise me — subscribe to the newsletter. I share exclusive insights and updates about the process that don't make it into the regular posts.

— Sharbel